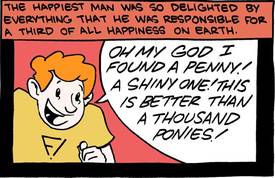

A characteristically great Saturday Morning Breakfast Cereal installment explores the hidden pitfalls of extreme utilitarianism. I just re-read Starship Troopers and was once again struck by Heinlein's strange idea of a scientifically provable "moral philosophy," in which every human situation is expressed in symbolic logic to test its validity.

We created a utilitarian ethics computer to replace government

(Thanks, Jamie!)